What are the key ingredients of high-performing energy efficiency programs? That question came to mind as I randomly grabbed an old edition of Public Utilities Fortnightly out of my six-inch stack of unread stuff. The article is entitled "Top-Performing States in Energy Efficiency," by Sanem Sergici of the Brattle Group. You can read it yourself, but I only got about three paragraphs in before realizing how broadly one must look to answer the question at hand.

Administrators and Delivery Contractors

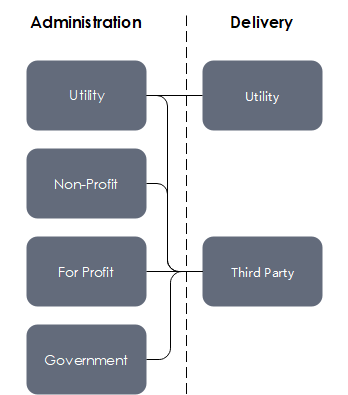

What is an administrator? An administrator is responsible for the results of a portfolio of programs in a defined territory or region. Nearly all program administrators fall into four categories: utility, non-profit corporation, for-profit corporation, or government. Illinois, Oregon, Wisconsin, and Maine, respectively, are examples of each.

The next level is the program delivery contractor, aka the implementer. I believe implementers fall into just two categories: the administrator itself or a third party. I am unaware of any scenario in which the utility delivers the programs while someone other than the utility handles administration. However, some utilities run both self-delivered and third-party-delivered programs; California is one example.

Independence


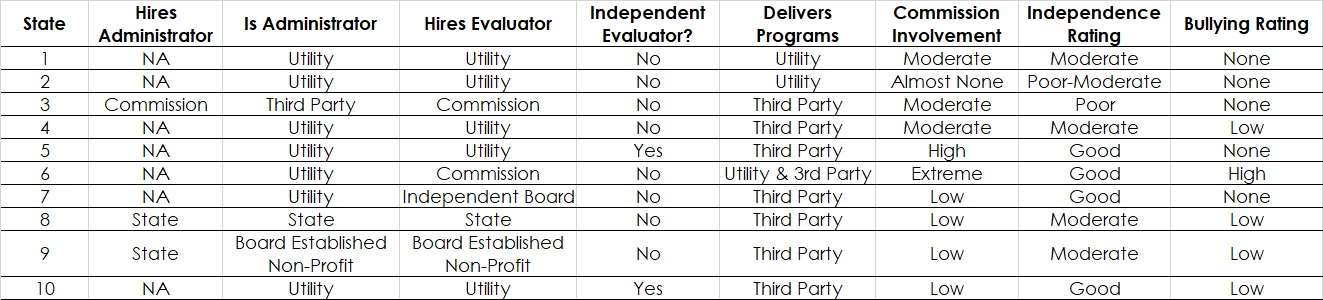

Independence is critical for the integrity of results and performance. An independent evaluator evaluates nearly all programs. Here too, the sky is the limit with combinations of who hires whom. How in the world do I show all the crazy combinations of administrators, evaluators, implementers, and so on, when every state has a unique approach and setup, like a fingerprint? Here is a matrix of how states set up their energy efficiency portfolios. They are all over the map (pun alert). I did not name the states so as not to rile the audience.

Let’s walk across the columns to explain them:

Hires Administrator and Is Administrator: In most states I am familiar with, efficiency portfolios are administered by utilities, so no one hires the administrator. Some states instead have a third-party administrator: a for-profit organization, an established non-profit corporation, or a "quasi" government agency.

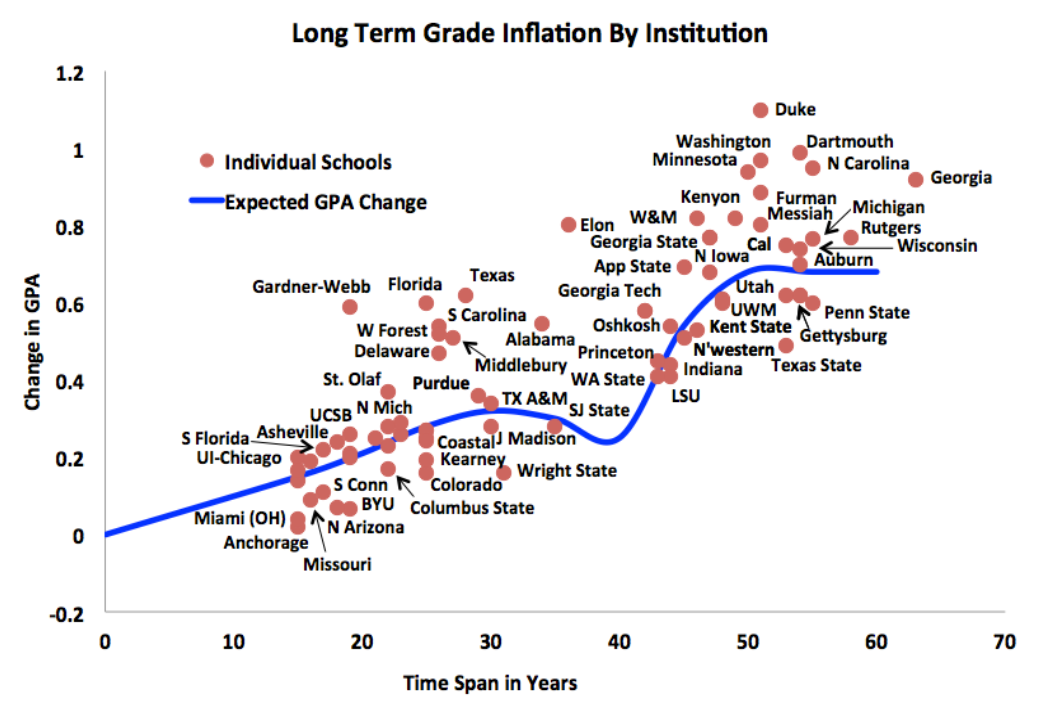

Hires Evaluator: Another factor is who hires the evaluator and therefore, to some extent, commands the evaluator's allegiance. You've heard of grade inflation in university systems. A similar bias exists in most jurisdictions, where evaluators "grade" the hand that feeds them. On that note, check out this plot of grade inflation by university. Send your kid to one with low inflation, so they learn something about the subject matter and about life.

Independent Evaluator: A few states have a statewide independent evaluator even though every utility also hires its own evaluator. Confusing? To some extent, yes, but the state's evaluator keeps things level across the utilities' evaluators, apples to apples, and keeps an eye on the bias noted in the previous section. It works quite well in some states, although the evaluation machines tend not to appreciate a third party picking at their work.

Delivers Programs: Most states, regardless of who the administrator is, farm out the delivery of their programs to third parties. The larger the portfolio and budget, the greater the number of delivery contractors. The disadvantage of hiring a bunch of competitors, er, delivery contractors, is siloing: it is difficult to present a cohesive front to customers. Customers don't know that Team A does the energy audit, Team B handles prescriptive measures, Team C handles retro-commissioning, Team D handles custom efficiency, and Team E handles new construction for a building addition. In fact, they don't even know these different programs exist.

Furthermore, especially as portfolios trend toward pay-for-performance, Teams A through E will be reluctant to spend time steering a customer to a more appropriate program. The teams are not compensated for that; they are scrapping for savings so they get fully paid for services rendered. These effects are much easier to avoid when the utility runs everything. However, the thorns in utilities' sides, aka "intervenors," think that arrangement stifles innovation.

Commission Involvement: Commissions are the appointed or elected regulators of utilities, e.g., public utilities commissions or commerce commissions. They remind me of labor unions. In some cases, you may be surprised there is a union at all because things run so smoothly; in others, it is painful, and they'll take a pound of flesh at every chance. One commission in particular likes to beat down the savings and the attribution scores, even when the evaluators work for the commission! This habit spills over (pun alert) into other states where these evaluators work; that much I can tell you.

Independence Rating: This is Jeff's subjective but accurate assessment of the independence among program delivery contractor results, the evaluator, and the commission. In my opinion, the commission should independently oversee both the program delivery and evaluation contractors, because doing so provides a high level of transparency. As for independence, states fall into one of the following categories:

1) A cooperative environment of trust to do the right thing, with decent but not great oversight.

2) Same as 1), but with almost no oversight or transparency.

3) The commission owns (hires) the teams (delivery contractors) and the referees (evaluators). Not good. Professional wrestling, anyone?

4) The commission owns the evaluation team(s), and maybe even an independent oversight evaluator on top of that, while utilities own the delivery team(s).

5) The state or state-chosen third parties own both the teams and the referees, in a less political environment than 3).

Bullying Rating: There is bullying in efficiency programs? That is my word for influencing the results, as described in the Commission Involvement section above. In that example, the commission owns the evaluation team and the utilities own the program delivery teams, and it gets combative. In other cases, the commission or a non-profit owns both teams, and there is some pressure to produce near-perfect evaluation scores. See how it works?

This is all a preamble to the Fortnightly article mentioned at the outset. Beyond everything explained in that article, which I may cover to some degree next week, there are all these additional factors in how the teams, referees, and intervenors work together, bend, twist, or, uh, operate with a wink and a secret handshake.

[1] Source: my brain
